Executive Summary

Andrej Karpathy's four structural failure patterns of LLM coding agents — unchecked assumptions, over-complication, orthogonal damage, and unvalidated execution — published in January 2026, are not simply a code quality issue. According to Lightrun 2026, 43% of AI-generated code requires debugging in production, and Cortex reported a 23.5% increase in incidents/PRs after agent adoption. When these defects pass through data pipelines, they transform into schema drift, silent filter changes, and Phantom Success — contaminating training data itself.

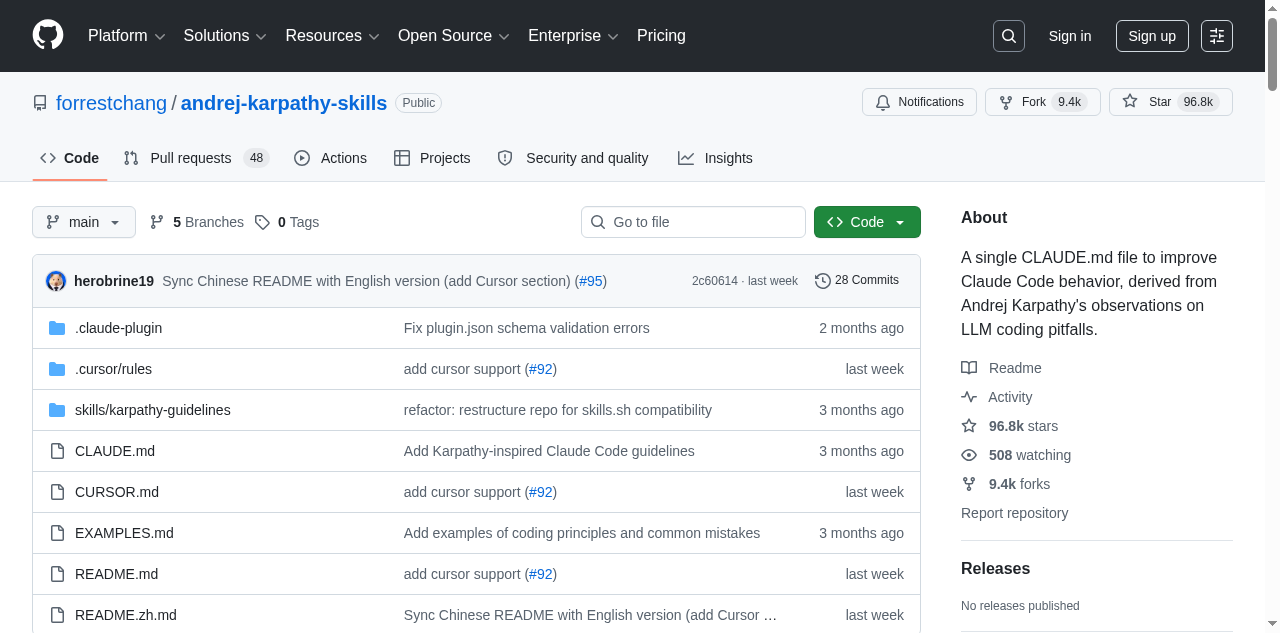

The andrej-karpathy-skills repository, where Forrest Chang distilled Karpathy's observations into a 70-line CLAUDE.md file, has surpassed 95.9k stars, a strong signal of demand for a "code gate." Yet the "data gate" that validates the data produced by that code remains an unaddressed gap.

This report traces how agent code failures contaminate data pipelines, and argues why the dual-defense architecture of CLAUDE.md (code gate) + DataClinic (data gate) constitutes an expanded definition of AI-Ready Data. It also addresses the ETH Zurich finding that context files deliver only a +4% improvement in benchmark task resolution.

Karpathy's Warning — Four Structural Failures

Having delegated 80% of his coding work to agents since December 2025, Karpathy shared four structural pitfalls from direct experience on X (formerly Twitter) in January 2026. These are not mere inconveniences. arXiv 2512.07497 (Roig), analyzing 900 agent execution traces, and arXiv 2511.04355 (Sharifloo), studying 6 LLMs and 114 consistently failing tasks, confirmed the same patterns academically. Stack Overflow 2025 shows 84% of developers use AI tools, yet trust has dropped from 40% to 29%.

"The models make wrong assumptions on your behalf and just run along with them without checking."

— Andrej Karpathy, 2026-01-26

Each of Karpathy's four warnings maps to a concrete contamination path in data pipelines. The table below provides a 1:1 mapping between Karpathy's observations, Roig's academic classification, the resulting code defect, and the data contamination outcome.

| Karpathy Pitfall | Academic Pattern (Roig) | Code Defect | Data Contamination |
|---|---|---|---|
| Unchecked Assumptions | Silent Wrong Assumptions | NULL handling errors, type loss | Schema drift, distribution skew |
| Over-complication | Over-complication | Excessive memory, OOM | Batch data loss |
| Orthogonal Changes | Orthogonal Damage | Side-effect filter mutation | Silent class drop |
| Unvalidated Execution | Goal Misalignment | Empty result propagation | Phantom Success |

"They really like to overcomplicate code and APIs, bloat abstractions, and implement a bloated construction over 1000 lines when 100 would do."

— Andrej Karpathy, 2026-01-26

This over-complication pattern is more than a messy-code problem. When unnecessary abstraction layers accumulate in pipeline code, memory usage spikes, and out-of-memory (OOM) failures in batch jobs cause data loss. arXiv 2512.07497 concluded that "model scale alone cannot predict agentic robustness."

The most dangerous pattern is "orthogonal changes." In Karpathy's words, agents "change or delete comments and code that it doesn't fully understand as a side effect." When this side effect silently alters a filter condition in a data pipeline, an entire class of data disappears — a silent class drop that leaves no errors in the logs.
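A silent class drop of this kind can be caught by comparing per-class counts between two pipeline runs. The following is a minimal sketch, not a description of any particular tool: the function name `find_dropped_classes` and the `min_ratio` threshold are hypothetical, and the label lists are made-up sample data.

```python
from collections import Counter


def find_dropped_classes(baseline_labels, current_labels, min_ratio=0.5):
    """Return classes that vanished or shrank sharply between two runs.

    min_ratio is a hypothetical threshold: a class is flagged when its share
    of the current run falls below min_ratio times its baseline share.
    """
    base = Counter(baseline_labels)
    curr = Counter(current_labels)
    base_total = sum(base.values())
    curr_total = sum(curr.values())
    flagged = {}
    for label, base_count in base.items():
        base_share = base_count / base_total
        curr_share = curr[label] / curr_total if curr_total else 0.0
        if curr_share < min_ratio * base_share:
            # Record (baseline count, current count) for the report.
            flagged[label] = (base_count, curr[label])
    return flagged


# Made-up sample data: the "bird" class disappears after a filter
# condition was altered as a side effect of an unrelated change.
baseline = ["cat"] * 40 + ["dog"] * 40 + ["bird"] * 20
current = ["cat"] * 40 + ["dog"] * 40

print(find_dropped_classes(baseline, current))
```

Comparing shares rather than raw counts keeps the check stable when the overall batch size changes between runs.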

The Power of One CLAUDE.md — Anatomy of 95.9k Stars

Forrest Chang distilled Karpathy's observations into a single markdown file. The andrej-karpathy-skills repository consists of a single 20KB file. Created on January 27, 2026, it hit a single-day peak of 5,828 stars on April 13 (global #2). As of April 28, it has reached 95.9k stars and 9.3k forks, making it one of the fastest-growing single-file repositories in GitHub history.

The four core principles this file codifies are:

1. Think Before Coding: verify assumptions and plan before writing any code
2. Simplicity First: don't write 1,000 lines when 100 will do
3. Surgical Changes: don't touch unrelated code
4. Goal-Driven Execution: validate and confirm results before calling it done

This is more than prompt engineering. A one-off prompt disappears when the context window resets, but CLAUDE.md resides at the project root and is automatically loaded in every session. arXiv 2406.12513 confirmed that this In-Context Learning (ICL) pattern is also effective for security improvements. arXiv 2505.14810 shows that performance begins degrading beyond 150 instructions, so compressing the rules to 4–5 essentials is a design choice with empirical support.
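One way to keep a context file under the 150-instruction threshold cited above is to count its rules in CI. The sketch below is an illustration only: it assumes that each markdown list item approximates one instruction, which is a crude proxy, and the names `count_instructions` and `check_budget` are hypothetical.

```python
import re

# Assumption: one bullet or numbered list item counts as one instruction.
INSTRUCTION_LINE = re.compile(r"^\s*(?:[-*+]|\d+[.)])\s+\S")


def count_instructions(markdown_text):
    """Count list items in a markdown context file."""
    return sum(
        1 for line in markdown_text.splitlines() if INSTRUCTION_LINE.match(line)
    )


def check_budget(markdown_text, budget=150):
    """Return (instruction count, True if the count is within the budget)."""
    count = count_instructions(markdown_text)
    return count, count <= budget


# Made-up sample context file mirroring the four principles.
sample = """# Project rules
1. Think Before Coding
2. Simplicity First
3. Surgical Changes
4. Goal-Driven Execution
"""

print(check_budget(sample))
```

In practice the text would be read from the context file at the project root; the inline string keeps the example self-contained.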

CLAUDE.md is specific to Anthropic's Claude Code, but the approach has already spread across the broader ecosystem. AGENTS.md, under Linux Foundation governance, has been adopted in over 60,000 projects, and each platform — Cursor's .cursor/rules, Windsurf's .windsurfrules — supports similar behavior-correction files.

| File | Platform | Governance | Notes |
|---|---|---|---|
| CLAUDE.md | Claude Code | Anthropic | @imports, Skills Marketplace (658+) |
| AGENTS.md | Universal (14+ platforms) | Linux Foundation | De facto standard, 60,000+ projects |
| .cursor/rules | Cursor | Anysphere | Local rules file, IDE integration |

arXiv 2511.10271 highlights "the instability of prompt-based non-functional quality optimization," arguing for persistent behavior correction rather than one-off prompts. CLAUDE.md is the simplest possible implementation of this persistent context — and the starting point for a new paradigm called "context engineering."

The Real Risk for Data Teams — From Code Failures to Pipeline Contamination

Code failure is not the end; it is the beginning. When agent-written defective code enters production, contamination propagates across every stage of the data pipeline. Meanwhile, AI-assisted engineers ship 60% more code to production than before, so both the probability of code defects and the shipping velocity have risen.

The accumulated evidence leaves no doubt: the quality risk of agentic coding is not hypothetical.

| Metric | Value | Source |
|---|---|---|
| Require debugging in production | 43% | Lightrun 2026 |
| Incidents/PRs increase | +23.5% | Cortex 2026 |
| AI code issue rate | 1.7x | CodeRabbit 2025 |
| Security test failures | 45% | Veracode 2025 |

According to Lightrun 2026 (200 SRE/DevOps respondents), 43% of AI-generated code requires production debugging, and 88% redeploy 2–3 times per single fix. Cortex 2026 reported a 30% increase in change failure rate after agent adoption. CodeRabbit's analysis of 470 PRs found AI code has 1.7x the issue rate of human code, with security vulnerabilities 2.74x higher.

Here is what data teams often miss. The statistics above measure code defects themselves — not what those defects do to data. The moment a code defect passes through the pipeline and lands in the data, the problem goes silent. No errors in the logs, tests pass, and the pipeline looks healthy.
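Because a pipeline step can finish without error while producing empty or near-empty output, a row-count guard between steps is one simple way to surface Phantom Success. This is a sketch under stated assumptions: `PhantomSuccessError`, `check_row_counts`, and the `min_retention` threshold are hypothetical names and values chosen for illustration.

```python
class PhantomSuccessError(RuntimeError):
    """Raised when a step ran without error but its output is suspiciously small."""


def check_row_counts(step_name, rows_in, rows_out, min_retention=0.1):
    """Return the retention ratio, or raise if output is empty or too small.

    min_retention is a hypothetical threshold; a sensible value depends on
    what the step is expected to filter out.
    """
    retention = rows_out / rows_in if rows_in else 0.0
    if rows_out == 0 or retention < min_retention:
        raise PhantomSuccessError(
            f"{step_name}: {rows_in} rows in, {rows_out} rows out "
            f"(retention {retention:.1%})"
        )
    return retention


# A healthy step passes the guard.
print(check_row_counts("dedupe", 1000, 950))

# A step that silently produced nothing is stopped instead of propagating.
try:
    check_row_counts("filter_by_region", 1000, 0)
except PhantomSuccessError as err:
    print("blocked:", err)
```

The guard turns a silent empty result into a loud failure at the step boundary, before downstream stages consume it.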

Place these numbers in the context of data pipelines and the meaning shifts. "Unchecked assumptions" causing type loss skew embedding distributions; "orthogonal changes" dropping specific classes bias model decision boundaries. The Model Collapse research from Nature 2024 (Shumailov) proved that such contamination, through iterative training, leads to "irreversible defects — permanent loss of the original distribution's tail."
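Type loss and NULL handling errors can be detected before they reach embeddings by checking each batch against an expected schema. The following is a minimal sketch with hypothetical names (`detect_schema_drift`, `max_null_rate`) and made-up rows; it is a generic illustration, not the method of any specific product.

```python
def detect_schema_drift(rows, expected_types, max_null_rate=0.05):
    """Report columns whose null rate or value types deviate from expectations.

    rows is a list of dicts; expected_types maps column name to a Python type.
    max_null_rate is a hypothetical tolerance for missing values.
    """
    issues = []
    total = len(rows)
    for column, expected in expected_types.items():
        values = [row.get(column) for row in rows]
        nulls = sum(v is None for v in values)
        wrong = sum(v is not None and not isinstance(v, expected) for v in values)
        if total and nulls / total > max_null_rate:
            issues.append((column, "null_rate", nulls / total))
        if wrong:
            issues.append((column, "type_mismatch", wrong))
    return issues


expected = {"user_id": int, "amount": float}

# Made-up batch: one amount arrived as a string, one as None.
batch = [
    {"user_id": 1, "amount": 9.5},
    {"user_id": 2, "amount": "12.0"},
    {"user_id": 3, "amount": None},
    {"user_id": 4, "amount": 3.0},
]

issues = detect_schema_drift(batch, expected)
print(issues)
```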

When this contamination reaches training data, how can it be detected? Code review looks at code. But who looks at the quality of the data that code produces? DataClinic's Level 2 density analysis and outlier detection are tools capable of catching this type of distribution distortion. If an agent silently changed a filter condition as a side effect and an entire class of data disappeared, DataClinic's per-class distribution diagnostics can identify that symptom.

Gartner estimates the average annual cost of bad data to enterprises at $12.9–15M, and projects that 60% of AI projects will be abandoned by 2026 due to the absence of AI-ready data. arXiv 2511.10271 warns that "generated code can accelerate the accumulation of technical debt."

Dual-Defense Architecture — CLAUDE.md + DataClinic

CLAUDE.md serves as the first line of defense (code gate), correcting agent behavior at the moment code is written. However, ETH Zurich (arXiv 2602.11988) experiments found that human-authored context files yielded only +4% improvement in benchmark task resolution, while LLM-auto-generated context files actually showed a -3% negative effect. The code gate alone is not enough.

After defects that slip past the code gate contaminate data, a second layer of defense at the data level (data gate) becomes necessary. DataClinic is the post-hoc diagnostic engine positioned to fill this role.

[Figure: Dual-Defense Flow, from Code Gate to Data Gate]

Concretely, Karpathy's pitfalls map to DataClinic's diagnostic domains as follows. Distribution distortion from "unchecked assumptions" is detectable at DataClinic Level 1 (basic statistics). Class drops from "orthogonal changes" can be identified as density anomalies at Level 2 (DataLens neural network). When OOM errors caused by "over-complication" make batch jobs fail, the resulting losses appear as per-class sample count imbalances, which also fall within DataClinic's diagnostic scope.
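To make the idea of a basic-statistics check concrete, the sketch below measures how far a column's mean has moved from a baseline, in units of the baseline standard deviation. It is a generic illustration only and does not describe how DataClinic works internally; the function name `mean_shift_in_sigmas` and the sample values are assumptions.

```python
import statistics


def mean_shift_in_sigmas(baseline, current):
    """Return the shift of the current mean, in baseline standard deviations."""
    base_mean = statistics.fmean(baseline)
    base_std = statistics.pstdev(baseline)
    if base_std == 0:
        raise ValueError("baseline has zero variance; shift is undefined")
    return (statistics.fmean(current) - base_mean) / base_std


# Made-up values for one numeric column.
baseline = [10, 12, 11, 13, 9, 11, 10, 12]

# Same column after a defect coerced missing values to 0.
current = [10, 0, 11, 0, 9, 0, 10, 12]

shift = mean_shift_in_sigmas(baseline, current)
print(f"mean shift: {shift:.2f} sigma")
```

A shift of several standard deviations between consecutive runs is a reasonable trigger for a closer look, though the right alert threshold depends on the column.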

Cortex 2026 reports that only 32% of companies have established formal governance policies for agentic coding, while 27% have no policy at all. Gartner reports that 96% of enterprises plan to adopt data observability within one year. Combining the code gate (behavior-correction files) with the data gate (post-hoc diagnostics) is the natural convergence of these two trends.

Google is building Agent Identity/Gateway/Anomaly Detection, Amazon is developing Bedrock AgentCore, and Microsoft is establishing an Enterprise Code of Conduct, all at the platform level. Yet no major player currently offers data-level tooling to diagnose "how agent code broke the data." This is why the second wall of the dual-defense architecture, the data gate, is needed.

Limits and Open Questions

The causal chain presented in this report is based on logical inference — empirical research measuring the direct impact of CLAUDE.md adoption on data pipeline quality does not yet exist. The limitations must be stated honestly.

First, the ETH Zurich study's +4% figure suggests context file effects may be modest. In practice, CLAUDE.md's value lies not in benchmark scores but in non-functional quality areas: reduced code complexity, side-effect prevention, and consistent rule application. However, quantitative evidence for these benefits is still accumulating. Veracode 2025 notes that "models have gotten better at writing functional code, but not at writing secure code."

Second, there are practical limits to scaling behavior-correction files. arXiv 2505.14810 shows instruction-following quality consistently degrades beyond 150 instructions. With 67% of practitioners maintaining duplicate configurations across multiple files, rule management itself risks becoming a new form of technical debt.

Third, the finding that removing data leakage from SWE-bench+ caused resolution rates to drop from 3.97% to 0.55% — a 7x collapse — raises the possibility that agentic coding capability is systematically overstated on benchmarks. The report that 88% of AI agent projects fail before reaching production points to the same concern.
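As a quick check on the arithmetic, using only the two resolution rates quoted above, the drop corresponds to roughly a sevenfold ratio and a relative decline of about 86%:

```latex
\[
\frac{3.97\%}{0.55\%} \approx 7.2,
\qquad
\frac{3.97 - 0.55}{3.97} \approx 0.86
\]
```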

The open questions remain for future research and practice to answer. Is the optimal number of CLAUDE.md rules 4 or 50? How should the feedback loop between the code gate and data gate be implemented? What is the safety threshold for agent code errors in Physical AI (autonomous vehicles, robotics) pipelines? Will big-tech platform-level governance eventually replace the markdown-file-based approach?

Why Pebblous Cares About This Topic

This report's causal chain — from agent code failure to data contamination to model performance degradation — directly intersects with Pebblous's core business domain. DataClinic is an engine for diagnosing distribution distortion, class imbalance, and outliers in data, and these diagnostic categories precisely match the symptoms that Karpathy's four pitfalls leave at the data layer. When "unchecked assumptions" cause type loss and skew embedding distributions, DataClinic Level 1 detects it; when "orthogonal changes" silently remove a specific class, Level 2 DataLens can identify it as a density anomaly.

From a data quality perspective, the Model Collapse mechanism proven by Nature 2024 (Shumailov) elevates the severity of this problem. When AI agent-generated defective code transforms data, and that data is used again for model training, the tail of the original distribution is permanently lost. If the feedback loop of code correction (preventive) and data diagnostics (corrective) is the practical path to preventing model collapse, DataClinic is positioned to handle the latter half of that loop.

On the enterprise practice side, the reality that AI-assisted engineers ship 60% more code to production while 43% requires debugging is rapidly expanding the contamination surface area of data pipelines. Data team leads and CDOs adopting agentic coding can consider establishing behavior-correction files as a code-level policy — and pairing this with data-level post-hoc diagnostics like DataClinic.

As competitive content analysis confirms, "how to use CLAUDE.md" (developer perspective) and "data quality tool comparisons" (data perspective) exist independently — but analysis that causally connects these two worlds is nearly absent. The questions to explore ahead are clear: How can DataGreenhouse's Observation Layer automatically collect data quality signals after agent code changes, and how can the Action Layer implement a loop to correct contaminated data? When the definition of AI-Ready Data expands beyond data collection and cleaning to encompass "pipeline code quality," what standards and measurement methods apply?

References

Academic Papers

- Kharma et al. (2025). "Security and Quality in LLM-Generated Code." arXiv: 2502.01853

- Sun et al. (2025). "Quality Assurance of LLM-generated Code." arXiv: 2511.10271

- Sharifloo et al. (2025). "Where Do LLMs Still Struggle?" arXiv: 2511.04355

- Mohsin et al. (2024). "Can We Trust LLM Generated Code?" arXiv: 2406.12513

- Jimenez et al. (2024). "SWE-bench." ICLR 2024. arXiv: 2310.06770

- "SWE-Bench+." (2024). arXiv: 2410.06992

- Shumailov et al. (2024). "The Curse of Recursion." Nature 2024. arXiv: 2305.17493

- Roig (2025). "How Do LLMs Fail In Agentic Scenarios?" arXiv: 2512.07497

- Vasilopoulos (2026). "Codified Context." arXiv: 2602.20478

- Schreiber & Tippe (2025). "Security Vulnerabilities in AI-Generated Code." arXiv: 2510.26103

- "Scaling Reasoning, Losing Control." (2025). arXiv: 2505.14810

- Gloaguen et al. (2026). "Evaluating AGENTS.md." ETH Zurich. arXiv: 2602.11988

Industry Reports

- Lightrun. (2026). State of AI-Powered Engineering Report

- CodeRabbit. (2025). State of AI vs Human Code Generation Report

- Veracode. (2025). GenAI Code Security Report

- Cortex. (2026). Engineering Benchmark Report

- Stack Overflow. (2025). Developer Survey

Primary Sources

- Karpathy, A. X post (2026-01-26).

- forrestchang/andrej-karpathy-skills. GitHub Repository

- Anthropic. CLAUDE.md Official Documentation.

- Linux Foundation. AGENTS.md Standard.